Overview

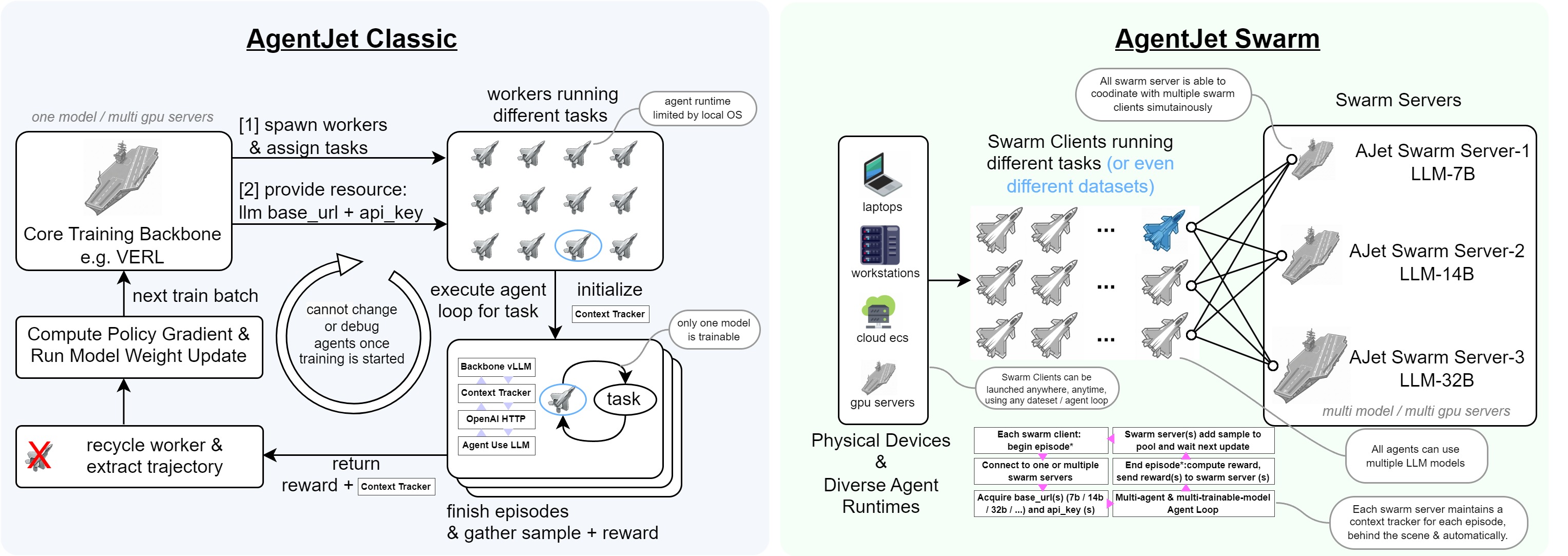

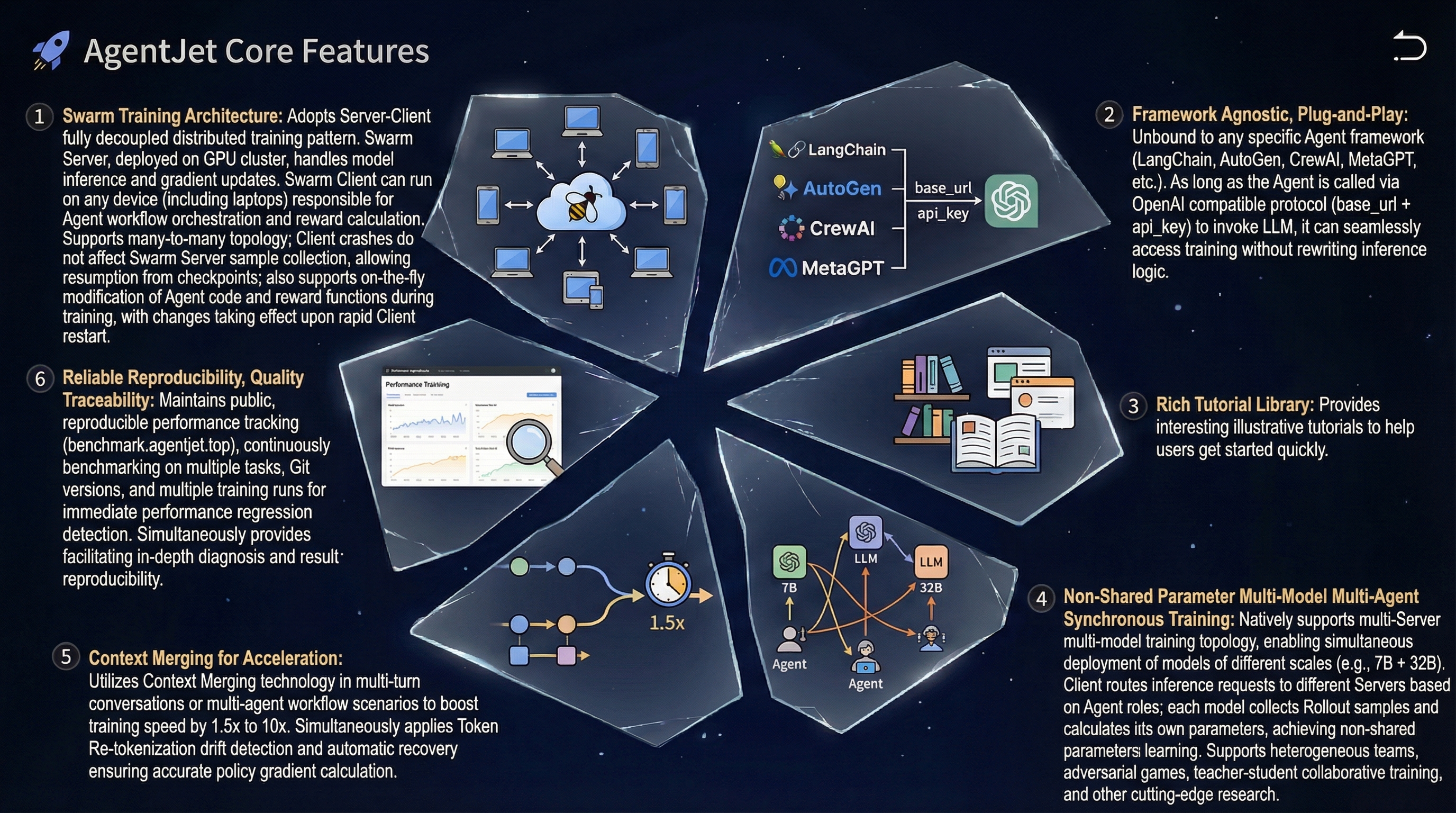

AgentJet (AJet) is a cutting-edge, user-friendly reinforcement learning (RL) framework for training agents. It optimizes agents and agentic workflows, including any agent built with the OpenAI SDK, AgentScope, LangChain, or raw HTTP requests, by fine-tuning the underlying LLM weights to improve model performance.

AgentJet also supports fully distributed swarm training: you can run `ajet-swarm start` on one or more GPU servers and then train agents from your laptop(s)! Simply provide your agent workflow, training dataset, and reward function, and AgentJet is ready to go!

Fast Introduction

Classic Mode

Let's begin with the simplest example: a math agent that makes a tool call, trained in a simple, centralized setup.

- Check out the installation guide to set up the training environment.

- Tune your first model using the minimal example.

Swarm Mode

The simplest AgentJet Swarm example is also a math agent. In this mode, you can train the model remotely from any GPU-less laptop.

- Start the swarm server and begin swarm overwatch: run `ajet-swarm start` and `ajet-swarm overwatch` (or `ajet-swarm top`). (Alternatively, if you prefer Docker, use our prebuilt Docker image here to skip setting up dependencies.)

- From your laptop (or from localhost on the swarm server), run this simple script to begin training:

Key Features

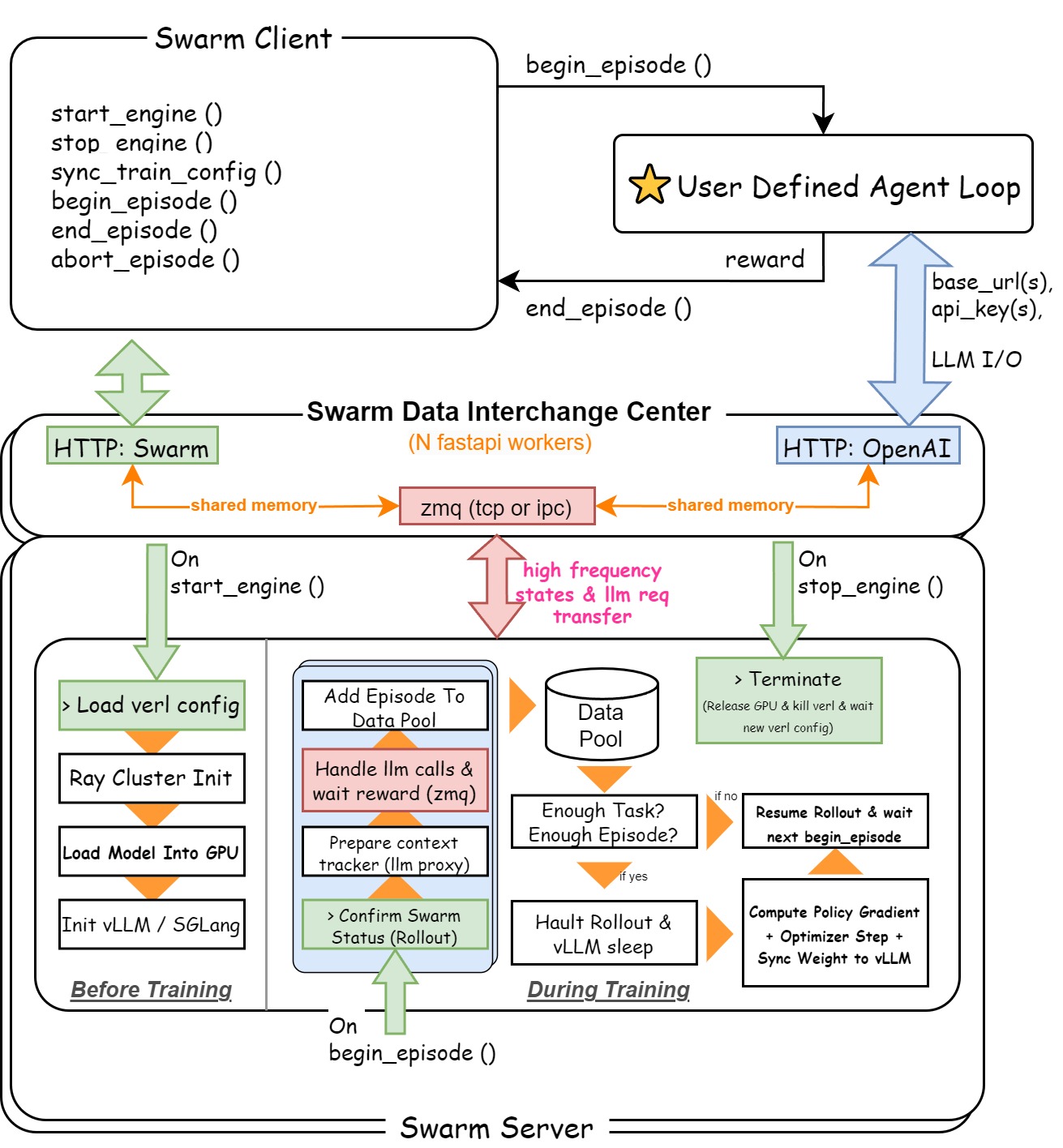

Swarm Training Mode

Swarm training in AgentJet opens up many possibilities: distributed, self-healing rollout workers; multi-agent training without shared parameters; and multi-runtime, multi-task cocktail training. And, just like Tinker, AgentJet Swarm lets you train models even from GPU-less laptops.

Get Started with Ease

AgentJet simplifies tuning the models that power your agent workflows. It supports nearly all major agent frameworks (e.g., AgentScope, LangChain), as well as framework-less agents built from raw HTTP requests.

Rich Tutorial Library

A rich set of beginner-friendly examples, including a math agent, a Werewolves RPG, AppWorld, and more, each with a step-by-step guide and together covering various agentic frameworks.

Reliable and Reproducible

Check out AgentJet's community-powered, robot-assisted open-benchmarking system. Share progress, compare training backbones, discover bugs, and iterate faster than ever! Click here to see AgentJet's performance across tasks, versions, and backbones.

Multi-agent and Multi-turn

Built to support advanced multi-agent and multi-turn LLM workflows, AgentJet integrates timeline-merging algorithms that automatically analyze and consolidate each agent's LLM timeline, speeding up training by 1.5x to 10x.
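To give an intuition for why merging helps, here is a minimal sketch of one plausible prefix-based merge. It assumes each LLM call is recorded as its full message list, and that a call whose context extends an earlier call's context can be folded into the same timeline. The helper names `is_prefix` and `merge_timelines` are hypothetical, and AgentJet's actual algorithm may work differently.

```python
from typing import Dict, List

Message = Dict[str, str]  # e.g. {"role": "user", "content": "..."}


def is_prefix(shorter: List[Message], longer: List[Message]) -> bool:
    """Return True if `shorter` is a message-by-message prefix of `longer`."""
    return len(shorter) <= len(longer) and longer[: len(shorter)] == shorter


def merge_timelines(calls: List[List[Message]]) -> List[List[Message]]:
    """Hypothetical merge: fold calls that share history into one timeline.

    If an existing timeline is a prefix of the new call, the timeline is
    extended; if the new call is a prefix of an existing timeline, it is
    already covered. Otherwise the call starts a new timeline.
    """
    timelines: List[List[Message]] = []
    for call in calls:
        for i, timeline in enumerate(timelines):
            if is_prefix(timeline, call):
                timelines[i] = list(call)  # extend the shared history
                break
            if is_prefix(call, timeline):
                break  # already contained in this timeline
        else:
            timelines.append(list(call))  # no shared history found
    return timelines


# Two turns of one conversation plus one unrelated call.
sys_msg = {"role": "system", "content": "You are a math agent."}
turn1 = [sys_msg, {"role": "user", "content": "2+2?"}, {"role": "assistant", "content": "4"}]
turn2 = turn1 + [{"role": "user", "content": "times 3?"}, {"role": "assistant", "content": "12"}]
other = [sys_msg, {"role": "user", "content": "unrelated"}, {"role": "assistant", "content": "ok"}]

merged = merge_timelines([turn1, turn2, other])
print(len(merged))  # 2: turn1 is folded into turn2
```

In this toy case, three recorded calls become two training sequences, because the shared history of `turn1` is not processed twice.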

High Resolution Logging

AgentJet logs token-level rollout details, including token IDs, token loss masks, and token log probabilities, and displays them in a web UI. This supports workflow development and agent diagnostics, and facilitates research on advanced LLM algorithms.
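As an illustration of what such a record could hold, the sketch below assumes one list per field, aligned by token position. The `TokenRecord` class and its field names are hypothetical and are not AgentJet's log schema. The loss mask marks which tokens (typically the model's own generated tokens) contribute to the training loss.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class TokenRecord:
    """Hypothetical token-level record for one rollout (fields aligned by position)."""

    token_ids: List[int]
    loss_mask: List[int]   # 1 = token contributes to the loss, 0 = context only
    logprobs: List[float]  # log probability of each token under the policy

    def __post_init__(self) -> None:
        n = len(self.token_ids)
        if len(self.loss_mask) != n or len(self.logprobs) != n:
            raise ValueError("token_ids, loss_mask and logprobs must have equal length")

    def num_trainable(self) -> int:
        """Count the tokens that contribute to the loss."""
        return sum(self.loss_mask)

    def masked_mean_logprob(self) -> float:
        """Mean log probability over trainable tokens only (0.0 if none)."""
        selected = [lp for lp, m in zip(self.logprobs, self.loss_mask) if m]
        return sum(selected) / len(selected) if selected else 0.0


# Three prompt tokens (masked out) followed by two generated tokens.
record = TokenRecord(
    token_ids=[101, 2054, 2003, 1016, 102],
    loss_mask=[0, 0, 0, 1, 1],
    logprobs=[0.0, 0.0, 0.0, -0.5, -1.5],
)
print(record.num_trainable())        # 2
print(record.masked_mean_logprob())  # -1.0
```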

Example Library

Explore our rich library of examples to kickstart your journey:

Math Agent

Training a math agent that can write Python code to solve mathematical problems.

AppWorld Agent

Creating an AppWorld agent using AgentScope and training it for real-world tasks.

Werewolves Game

Developing Werewolves RPG agents and training them for strategic gameplay.

Learning to Ask

Learning to ask questions like a doctor for medical consultation scenarios.

Countdown Game

Writing a countdown game using AgentScope and solving it with RL.

Frozen Lake

Solving a frozen lake walking puzzle using AgentJet's reinforcement learning.

Project Structure

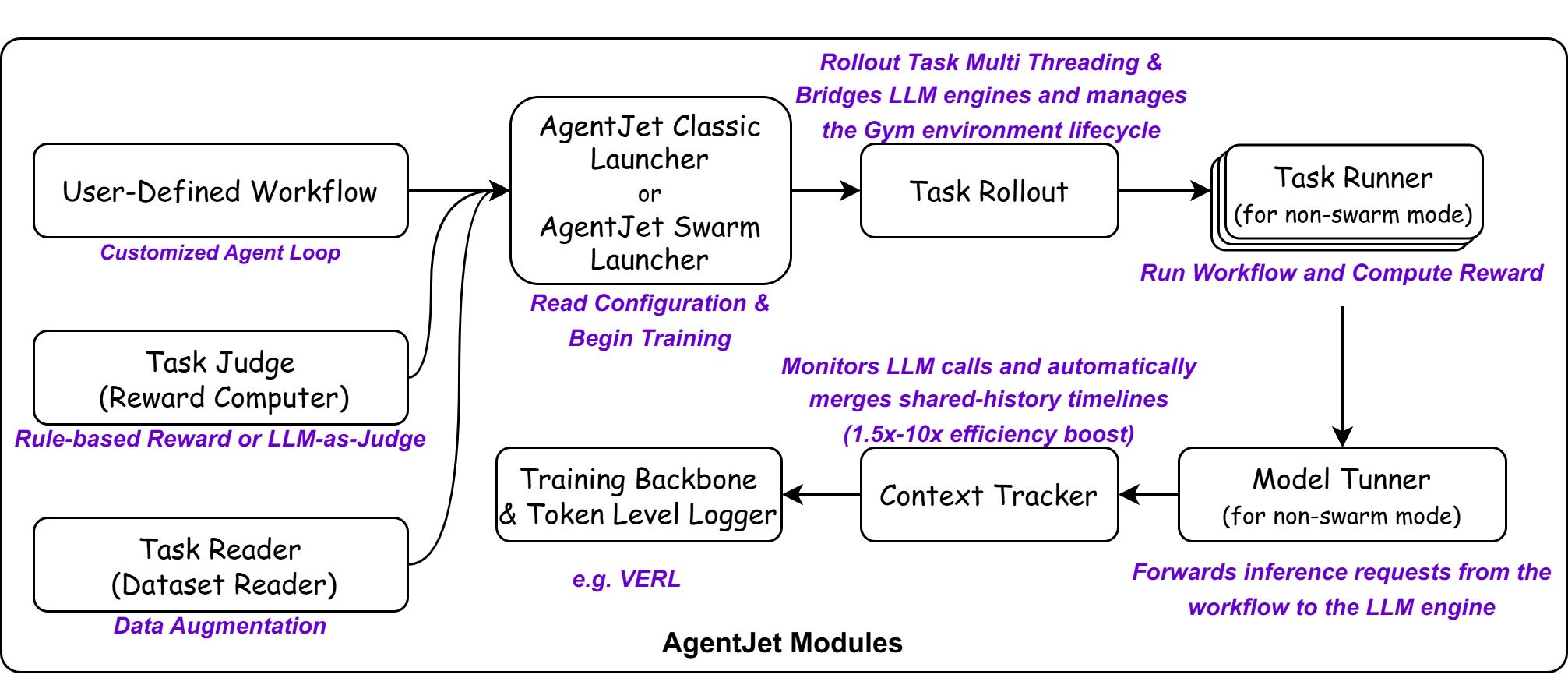

AgentJet makes agent fine-tuning straightforward by separating the developer interface from the internal execution logic.

Basic Modules

To optimize an agent, you provide three core inputs:

Trainable Workflow

Define your agent logic by inheriting from the Workflow class. Both simple and multi-agent setups are supported.
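The pattern is ordinary subclassing. So that the sketch runs on its own, it defines a stand-in `Workflow` base class; the method name `run`, its arguments, the `llm` callable, and `fake_llm` are all hypothetical and do not reflect the actual signature of AgentJet's Workflow class.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, List

Message = Dict[str, str]
LLM = Callable[[List[Message]], str]  # hypothetical: messages in, reply text out


class Workflow(ABC):
    """Stand-in base class for illustration; AgentJet's real Workflow differs."""

    @abstractmethod
    def run(self, task: Dict[str, str], llm: LLM) -> str:
        """Execute the agent on one task and return its final answer."""


class MathWorkflow(Workflow):
    """Single-agent example: ask the model to solve one math question."""

    def run(self, task: Dict[str, str], llm: LLM) -> str:
        messages = [
            {"role": "system", "content": "Solve the problem. Reply with the number only."},
            {"role": "user", "content": task["question"]},
        ]
        return llm(messages).strip()


# A fake LLM (hypothetical) so the sketch runs without a model server.
def fake_llm(messages: List[Message]) -> str:
    return " 4 " if "2 + 2" in messages[-1]["content"] else "unknown"


answer = MathWorkflow().run({"question": "What is 2 + 2?"}, fake_llm)
print(answer)  # 4
```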

Task Reader

Loads training tasks from JSONL files or Hugging Face datasets, or auto-generates them from documents.
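For the JSONL case, reading tasks amounts to parsing one JSON object per line. This sketch assumes each line carries hypothetical `question` and `answer` fields; the function `read_jsonl_tasks` is illustrative, and AgentJet's own task reader and expected schema may differ.

```python
import io
import json
from typing import Dict, Iterator, TextIO


def read_jsonl_tasks(stream: TextIO) -> Iterator[Dict]:
    """Yield one task dict per non-empty line of a JSONL stream.

    Assumed (hypothetical) schema per line: {"question": str, "answer": str}.
    """
    for line_no, line in enumerate(stream, start=1):
        line = line.strip()
        if not line:
            continue  # tolerate blank lines
        task = json.loads(line)
        missing = {"question", "answer"} - set(task)
        if missing:
            raise ValueError(f"line {line_no}: missing fields {sorted(missing)}")
        yield task


# In practice you would pass open("train.jsonl", encoding="utf-8").
sample = io.StringIO(
    '{"question": "What is 2 + 2?", "answer": "4"}\n'
    "\n"
    '{"question": "What is 3 * 5?", "answer": "15"}\n'
)
tasks = list(read_jsonl_tasks(sample))
print(len(tasks))  # 2
```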

Task Judger

Evaluates agent outputs and assigns rewards to guide the training process.
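For the math agent, a judger can be as simple as comparing the agent's final number with the reference answer. The sketch below assumes the reference is stored under a hypothetical `answer` field and uses a binary 1.0/0.0 reward with a small numeric tolerance; the `judge` function is illustrative, and real judgers (and AgentJet's judger interface) may use richer or shaped rewards.

```python
import re
from typing import Dict, Optional


def extract_number(text: str) -> Optional[float]:
    """Return the last number appearing in `text`, or None if there is none."""
    matches = re.findall(r"-?\d+(?:\.\d+)?", text)
    return float(matches[-1]) if matches else None


def judge(task: Dict[str, str], agent_output: str, tol: float = 1e-6) -> float:
    """Hypothetical binary reward: 1.0 if the final number matches the reference."""
    predicted = extract_number(agent_output)
    reference = extract_number(task["answer"])
    if predicted is None or reference is None:
        return 0.0
    return 1.0 if abs(predicted - reference) <= tol else 0.0


task = {"question": "What is 3 * 5?", "answer": "15"}
print(judge(task, "3 * 5 = 15"))        # 1.0
print(judge(task, "I think it is 16"))  # 0.0
print(judge(task, "no idea"))           # 0.0
```

Taking the last number is a common heuristic for "final answer" extraction; it is a design choice of this sketch, not something the source prescribes.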

The internal system orchestrates several specialized modules to handle the complexities of RL training and agent interactions.

| Module | Description |
|---|---|
| Launcher | Manages background service processes (Ray, vLLM) and routes the backbone |
| Task Rollout | Bridges LLM engines and manages the Gym environment lifecycle |
| Task Runner | Executes the agent workflow and calculates rewards |
| Model Tuner | Forwards inference requests from the workflow to the LLM engine |
| Context Tracker | Monitors LLM calls and automatically merges shared-history timelines (1.5x to 10x efficiency boost) |
| Swarm Server | A data interchange center that accepts OpenAI-like requests and engine instructions, activated only in AgentJet Swarm mode |

Swarm Architecture

When swarm training mode is enabled, an additional component will be activated:

- Swarm Data Interchange Server: Runs an HTTP service that listens for swarm instructions and OpenAI-compatible requests, and establishes a high-speed ZeroMQ (zmq) communication channel to coordinate the other modules.
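Because the server accepts OpenAI-compatible requests, an agent running on a laptop can in principle reach it with a plain HTTP call. The sketch below only builds such a request with the Python standard library and does not send it. The host and port in `SWARM_BASE_URL`, the `/v1/chat/completions` route (the standard OpenAI path), and the model name `my-model` are all assumptions; check the swarm documentation for the actual address.

```python
import json
import urllib.request

# Hypothetical address of a swarm server; replace with your own deployment.
SWARM_BASE_URL = "http://127.0.0.1:8000/v1"


def build_chat_request(base_url: str, model: str, prompt: str) -> urllib.request.Request:
    """Build (but do not send) an OpenAI-style chat completions request."""
    body = {
        "model": model,  # hypothetical model name
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


req = build_chat_request(SWARM_BASE_URL, "my-model", "What is 2 + 2?")
print(req.full_url)      # http://127.0.0.1:8000/v1/chat/completions
print(req.get_method())  # POST
# To send it: with urllib.request.urlopen(req) as resp: json.loads(resp.read())
```

Any OpenAI-compatible client, such as the official `openai` Python SDK configured with a custom `base_url`, could send the same request.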

Navigation

- Tutorials: From installation to tuning your first agent, the essential path for beginners.

- Core Components: Define your Classic Workflow or Swarm Workflow, and manage Data and Reward.

- Examples: Check the Example Library above for real-world cases such as the Math Agent, the Werewolves game, and the Learning to Ask task.

- Deep Dive: Master advanced Configuration.

Roadmap

AgentJet is a constantly evolving project. We are planning to add the following features in the near future.

| Category | Feature | Status |
|---|---|---|
| Examples | Add LoRA training examples | Todo |
| Infra | Optimize configurations for long-context adaptation on smaller GPUs | In Progress |
| Capability | Multi-modal training support | Todo |
| Capability | MARL credit assignment | Todo |
| Capability | Training dataset generation from few-shot samples | Todo |

Citation

If you use AgentJet in your research, please cite:

@software{
  title = {AgentJet: A Cutting-Edge Multi-Agent Training Platform for Large Language Models.},
  author = {The AgentJet Team},
  url = {https://modelscope.github.io/AgentJet/},
  month = {01},
  year = {2026}
}

Next Steps

Installation

Set up AgentJet environment and dependencies.

Quick Start

Run your first training in minutes.

First Agent

Build and train your own agent from scratch.

Examples

Explore detailed training examples.

[⭐ Star Us](https://github.com/modelscope/AgentJet) · [Report Bug](https://github.com/modelscope/AgentJet/issues) · [Request Feature](https://github.com/modelscope/AgentJet/issues)

Join the AgentJet DingTalk group to share your ideas!